Mind-controlled motion

We have recently discovered a visual illusion in which sequences of purely random textures are perceived as having coherent, repetitive, apparent motion. If you look at the random pixel array, do you see up and down motion, or side to side motion? In fact, the only motion is what you construct in your mind! How and why do such illusory percepts arise? And what do they tell us about our motion processing system and its sensitivity to attention and expectations?
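As a rough illustration of the kind of stimulus described above, the sketch below generates a sequence of independent random pixel arrays. The frame size, the number of frames, the binary (black/white) pixels, and the function names are all assumptions made for illustration; they are not the parameters or code used in the actual experiments.

```python
import random

def random_texture(height, width, rng):
    """Return one frame: a height x width grid of independent 0/1 pixels."""
    return [[rng.randint(0, 1) for _ in range(width)] for _ in range(height)]

def random_texture_sequence(n_frames, height, width, seed=None):
    """Return a list of frames, each drawn independently of the others.

    Because every frame is generated from scratch, no pixel pattern is
    shifted from one frame to the next, so the sequence contains no
    consistent physical motion signal in any direction.
    """
    rng = random.Random(seed)  # seeded generator so a sequence can be reproduced
    return [random_texture(height, width, rng) for _ in range(n_frames)]

# Hypothetical example: ten 8 x 8 frames.
frames = random_texture_sequence(n_frames=10, height=8, width=8, seed=0)
```

Shown in rapid succession, frames like these carry no net directional signal, so any coherent up-down or side-to-side motion a viewer reports must come from the observer rather than from the display.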

A 1-minute video about mind-controlled motion was selected as a Top 10 finalist in the 2015 Best Illusion of the Year Contest.

Face Representation and Recognition

Most of us are experts at recognizing faces, but how do we encode and represent the subtle information needed to distinguish among the thousands of faces we know? We are developing parametric models of faces to study how we remember typical and atypical faces, how we adapt to facial characteristics such as gender and race, and how we might use simple drawings as a tool for people to recall facial information.

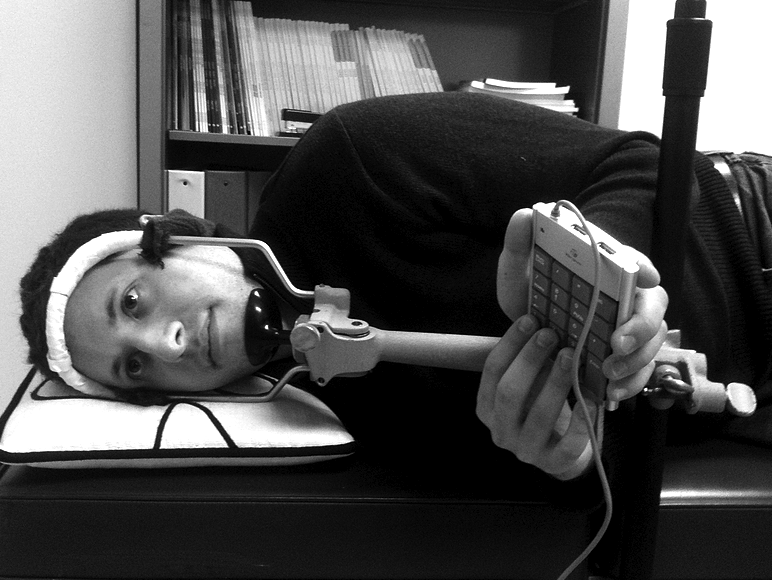

Spatial Orientation in Reality and Virtual Reality

Many visual domains are sensitive to orientation. For example, we are much better at perceiving faces or reading text when these stimuli are upright than when they appear in other orientations. But what reference frame(s) do we use to determine which way is up? In previous work, we have shown that orientation relative to both internal (e.g., retinal) and external (e.g., visual-environmental) reference frames influences face recognition performance. We are interested in further examining whether and how we combine proprioceptive, vestibular, and visual cues to process orientation-dependent stimuli. Using the Oculus Rift (DK2), we can create virtual environments that independently manipulate different cues to orientation.

Face Drawing

Led by Jennifer Day

Using a parameterized face space (based on Davidenko, 2007), we are examining how accurately people can draw faces. The parameterization not only simplifies the inherent complexity of faces but also provides a direct measure of drawing accuracy. So far, our data indicate that people draw upright faces more accurately than inverted faces, which suggests that holistic processing enhances drawing.
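To make the idea of a "direct measure of drawing accuracy" concrete, here is a minimal sketch that assumes each face, target or drawn, is represented as a vector of numeric parameters of equal length, and that accuracy is scored as the Euclidean distance between the two vectors. Both the distance metric and the name `drawing_error` are assumptions for illustration; the measure actually used in the project may differ.

```python
import math

def drawing_error(target, drawing):
    """Euclidean distance between two faces in parameter space.

    `target` and `drawing` are sequences of face-space coordinates.
    A smaller value means the drawing is closer to the target face.
    """
    if len(target) != len(drawing):
        raise ValueError("both faces must have the same number of parameters")
    return math.sqrt(sum((t - d) ** 2 for t, d in zip(target, drawing)))

# Hypothetical two-parameter faces, chosen only to make the arithmetic easy.
target_face = [0.0, 0.0]
drawn_face = [3.0, 4.0]
error = drawing_error(target_face, drawn_face)  # 5.0
```

Under this assumption, comparing the mean error for upright versus inverted targets would give one simple way to quantify the difference in drawing accuracy.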